The Olive Tree is Coming for AI

In 1999, Thomas Friedman boarded a bullet train in Japan. At 180 miles per hour, he glanced at the newspaper in his lap and had a Pulitzer Prize-worthy insight. He was reading about Palestinians and Israelis fighting over who owned which olive tree (not much has changed about that in 27 years ☹). He had visited a Lexus factory earlier that morning, all precision and efficiency: robotic arms were doing in seconds what craftsmen once did in hours.

Tom Friedman’s Pulitzer moment.

Friedman broke it down like this: The Lexus represented globalization, progress, modernization. The olive tree represented roots and identity. Friedman's argument wasn't that one would win. It was that the tension between them was the defining drama of the era.

Here we go again.

The Lexus has a new name

The current global race to adopt, deploy, and integrate artificial intelligence is the most Lexus moment humanity has experienced since, well, the Lexus. Speed. Efficiency. Scale. The obliteration of friction. Every industry, every boardroom, every government: all racing to figure out how to put AI into everything before someone else does. (Oh, and being able to say: “Y’all’s jobs won’t even be around in 5 years.”)

In Friedman's telling, globalization involved the inexorable integration of markets, nations, and technologies, in a way that was enabling individuals, corporations, and nation-states to reach around the world farther, faster, deeper, and cheaper than ever before, and in a way that was also producing a powerful counterforce.

Swap ‘globalization’ for ‘AI-ization’ and you have today's headline. Every word of it fits.

The key to Friedman’s understanding was this: The counterforce isn't a bug. It's a feature. It's not irrational resistance from people who don't understand the technology. It's the olive tree reasserting itself. Culture. Tradition. Identity. And in this context, the irreplaceable texture of being human. Friedman documented the ‘backlash’ against globalization and called it inevitable. I prefer ‘counterforce’ to ‘backlash’, given that, by and large, it’s accepted that the AI-Genie is out of the bottle and ‘being against it’ is akin to being against the sun rising: it’ll happen regardless.

You Will Pay a Premium to Talk to a Human

There's a concept economists talk about quietly — the premium of the human touch. Note that the more expensive the restaurant, the less likely it is to have automation via tablets or QR codes. At fine dining restaurants, the number of people serving you can rise along with the added work they do. The human touch is a specific and valued characteristic of the service. One of my own standout dining experiences at L’Abeille in Paris featured a bread sommelier, a water sommelier, a sommelier sommelier, and a number of waiters.

This isn't sentiment. It's a market signal.

The counterforce at work.

When everything can be generated, replicated, and automated, the rarest commodity becomes authentic human thinking — the kind that carries texture, perspective, and the irreplaceable fingerprints of lived experience. Today, more than 40% of consumers don't trust AI-generated content, especially when it's not clearly labeled. Meanwhile, 79% say a human understands them better than AI ever could (whether that’s true or not).

This may sound like a premature statement, but as with almost everything in AI, it is not: We are moving, faster than we realize, into a world where the most luxurious thing you can offer someone is a purely human experience. Not better-than-human. Not AI-assisted. Just... human. The consultant who went through the same thing you're facing and made real mistakes trying to solve it. The teacher who notices that the kid in the back row has gone quiet this week. The financial advisor who has managed your family finances for decades.

The diversity and nuance in the human condition and experience can make things hard for a probabilistic model – see last week’s post.

The Lexus factory produces Lexuses at scale. The olive tree produces exactly one olive at a time, on exactly that branch, in exactly that village. That's the point.

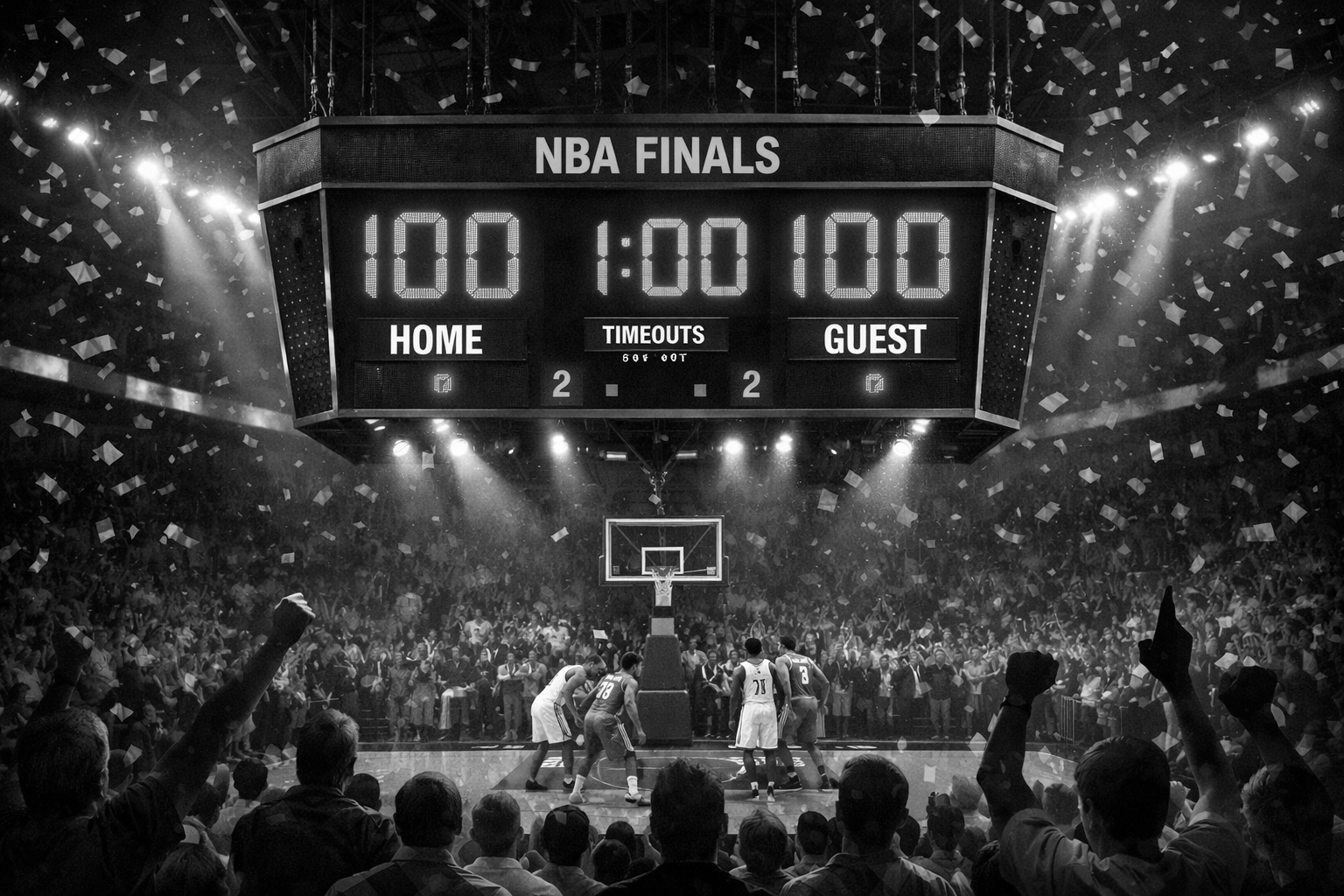

The NBA Finals Problem

Let me offer you a thought experiment to illustrate all of this:

In the NBA Finals, almost every game comes down to the final minute. Sometimes the final ten seconds. The lead changes. The foul is called. The three-pointer goes in or it doesn't. The crowd holds its collective breath.

Knowing this, why don't we just put 100 points on the board for each team, set the clock to 60 seconds, and play it out from there? We know that's where it gets decided. We know the rest is just — economically speaking — the long road to the interesting part. Why not skip it?

Of course, you already know the answer.

Is this really all we need?

You can't understand the final minute if you didn't live through the first three quarters plus 11 minutes of the fourth. The tension in that last minute exists because of everything that came before it. The comeback from 18 points down. The star player who fouled out in the third quarter. The rookie who suddenly had to carry more than they were supposed to. The final minute is downstream of everything that happened before it, and meaningless without it.

We need the story. We need to know how we got there. We need human pace.

This is the backstop against unbounded AI adoption, and it's more powerful than any regulation, any ethics committee, any corporate AI policy. AI may automate codified knowledge, but not the tacit knowledge that stems from experience. The distinction matters: Tacit knowledge isn't just a different kind of data point. It's information that can only be acquired the long way. By seeing the whole basketball game.

AI operates at lightning speed and wants to hand you the outcome. Humans need the journey to make sense of the outcome. Those two things are not reconcilable by better prompting (ironically, poor prompting, and the iterations needed to reach a desired output, may offer a better approximation of that journey).

The coming groundswell

Friedman described two forces in the globalization era: the backlash (read: counterforce) against the system or technology, and what he called the groundswell, the force demanding its benefits. Both existed simultaneously.

We're about to see the same dynamic with AI. The counterforce manifests in consumers consciously paying more for a therapy session with an actual therapist. Financial services firms offering human advice (maybe AI-enabled, but still delivered by a human). Parents who decide their kids' school needs a philosophy of no AI in the classroom, ever. Students enrolling in the humanities rather than STEM, because they need to rediscover human outcomes, even when interacting with AI.

Every major technological shift has reshaped the skill mix by moving people up the value chain. As automation takes on certain tasks, demand is growing for distinctly human skills — judgment, communication, sensemaking, and contextual understanding.

The olive tree has been here for thousands of years. It was here before the Lexus. It was here before Friedman got on that Shinkansen. It will be here after the current AI gold rush loses steam and we start asking what we actually gave up.

The question isn't whether the counterforce is coming. The question is whether you're building something that survives it — or something that becomes its symbol.

The Lexus and the Olive Tree. Again. Still. Always.